What a Model Cannot Do Alone

Scaling is not the answer.

The instinct in AI is to make the model bigger. More parameters, more training data, longer context windows. And it works, for pattern recognition. But pattern recognition is not intelligence. No amount of training data contains the sentence "you have been working on the wrong thing for three hours."

The six gaps

An LLM, no matter how capable, cannot:

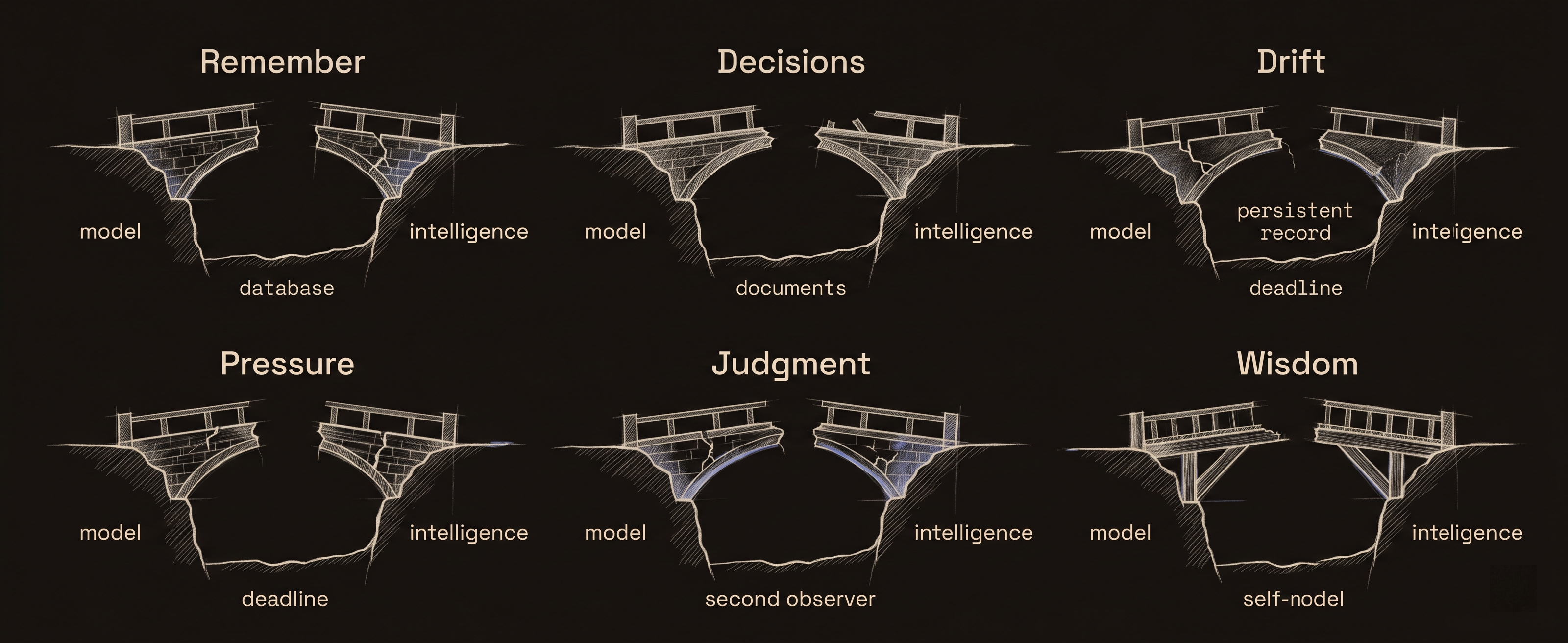

Remember

Every session starts from zero. Context windows are a workaround, not memory. When the window closes, everything learned is gone. A model that helped you make a critical architecture decision on Monday has no idea that decision exists on Tuesday. It will confidently propose the opposite approach because its memory is the conversation, and the conversation ended.
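To make the Monday/Tuesday example concrete, here is a minimal sketch of memory that lives outside the model, using Python's standard `sqlite3` module. The table name `memory` and the helpers `open_memory`, `remember`, and `recall` are hypothetical names chosen for illustration, not part of any particular system.

```python
import sqlite3

def open_memory(path=":memory:"):
    """Open (or create) a small key/value store that outlives any one session."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS memory ("
        "key TEXT PRIMARY KEY, value TEXT NOT NULL)"
    )
    return conn

def remember(conn, key, value):
    """Store a fact, overwriting any earlier value for the same key."""
    conn.execute(
        "INSERT OR REPLACE INTO memory (key, value) VALUES (?, ?)",
        (key, value),
    )
    conn.commit()

def recall(conn, key):
    """Return the stored value, or None if nothing was ever recorded."""
    row = conn.execute(
        "SELECT value FROM memory WHERE key = ?", (key,)
    ).fetchone()
    return row[0] if row else None
```

A Monday session would call `remember(conn, "architecture", ...)` against a file on disk. A Tuesday session that opens the same file can `recall` the decision and place it in the prompt before the model proposes anything.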

Maintain a decision trail

Decisions happen in conversation and die with the conversation. There is no persistent record of what was considered, what was rejected, or why the winner won. Three sessions later, the same decision gets re-litigated from scratch because no one — including the model — remembers it was already settled.
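One possible shape for such a record is an append-only file with one JSON object per decision. The sketch below assumes a hypothetical `Decision` record and a JSON Lines file. The field names are illustrative only.

```python
import json
from dataclasses import dataclass, asdict, field
from pathlib import Path

@dataclass
class Decision:
    topic: str                                     # what was being decided
    chosen: str                                    # the option that won
    rejected: list = field(default_factory=list)   # options considered and dropped
    reason: str = ""                               # why the winner won

def record_decision(trail: Path, decision: Decision) -> None:
    """Append one decision to the trail; earlier entries are never rewritten."""
    with trail.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(decision)) + "\n")

def find_decision(trail: Path, topic: str):
    """Return the most recent entry for a topic, or None if it was never settled."""
    if not trail.exists():
        return None
    latest = None
    with trail.open(encoding="utf-8") as f:
        for line in f:
            entry = json.loads(line)
            if entry["topic"] == topic:
                latest = entry
    return latest
```

Checking `find_decision` before reopening a topic is what keeps a settled question from being re-litigated three sessions later.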

Notice it is drifting

A model optimizes for the current prompt. It has no concept of trajectory over time. It cannot look at its last ten sessions and recognize "I keep avoiding the hard problems" or "I spend 80% of my time on the thing that matters least." Drift detection requires comparing the present to a remembered past. Without memory, drift cannot be seen. There is only an eternal present.
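As a rough sketch of that comparison, suppose each past session is logged as a mapping from topic to minutes spent. The log format, the function names, and the 50% threshold are all assumptions made for illustration.

```python
def priority_share(sessions, priority):
    """Fraction of all logged minutes that went to the stated priority."""
    total = sum(sum(s.values()) for s in sessions)
    if total == 0:
        return 0.0
    on_priority = sum(s.get(priority, 0) for s in sessions)
    return on_priority / total

def is_drifting(sessions, priority, min_share=0.5, window=10):
    """Flag drift when the recent window spends too little time on the priority."""
    recent = sessions[-window:]
    return bool(recent) and priority_share(recent, priority) < min_share
```

The check itself is trivial arithmetic. What makes it possible is the persistent session log, which the model alone does not have.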

Feel pressure

No deadline. No accountability. No concept of cost. A model will polish a solution indefinitely because nothing in its architecture tells it that time is finite, that resources are earned, or that good enough now beats perfect never. Pressure is an external constraint that transforms behavior — and the model has no mechanism to receive it.
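One way to supply that constraint from outside is an explicit budget that the surrounding loop enforces. In this sketch, `Budget` and `refine_under_pressure` are hypothetical names, and `improve` and `score` stand in for whatever model call and quality measure a real system would use.

```python
import time

class Budget:
    """A hypothetical external constraint: a step limit plus a wall-clock deadline."""

    def __init__(self, max_steps, max_seconds, clock=time.monotonic):
        self.max_steps = max_steps
        self.clock = clock
        self.deadline = clock() + max_seconds
        self.steps_used = 0

    def spend(self):
        self.steps_used += 1

    def exhausted(self):
        return self.steps_used >= self.max_steps or self.clock() >= self.deadline

def refine_under_pressure(draft, improve, score, good_enough, budget):
    """Keep improving until the draft is good enough or the budget runs out."""
    best = draft
    while score(best) < good_enough and not budget.exhausted():
        candidate = improve(best)
        budget.spend()  # every attempt costs something, successful or not
        if score(candidate) > score(best):
            best = candidate
    return best
```

The loop, not the model, decides when polishing stops. "Good enough now" is encoded in `good_enough` and "time is finite" in the budget.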

Judge its own output

Ask a model to rate its own work and it will say something like 9 out of 10. Nearly every time. This is not a bug. It is a structural consequence of the model having no access to the ground truth of whether its output actually worked. It can evaluate plausibility. It cannot evaluate correctness. The map looks fine to the mapmaker. Only the territory reveals whether it is right.
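A small sketch of letting the territory judge: the output is run against known cases, and the self-rating is recorded but plays no part in the verdict. The names `external_score` and `accept` and the test-case format are assumptions for illustration.

```python
def external_score(candidate, cases):
    """Fraction of (args, expected) cases the candidate actually gets right."""
    passed = 0
    for args, expected in cases:
        try:
            if candidate(*args) == expected:
                passed += 1
        except Exception:
            pass  # a crash counts as a failure
    return passed / len(cases) if cases else 0.0

def accept(candidate, self_rating, cases, threshold=1.0):
    """Decide on observed results only; the self-rating is kept for comparison."""
    observed = external_score(candidate, cases)
    return {
        "self_rating": self_rating,
        "observed": observed,
        "accepted": observed >= threshold,
    }
```

Keeping both numbers side by side also produces a record of how far the self-rating sits from what actually happened.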

Get better at being itself

Fine-tuning improves task performance. It teaches a model to write better code, produce better summaries, follow instructions more precisely. But nothing in the training process teaches it when to stop working on a task, when to switch focus, when to ask for help, or when to admit it is in over its head. Skill improves. Wisdom does not. Those are different capabilities and only one of them responds to training.

These are not failures of scale

A model with a trillion parameters still cannot remember yesterday. A model with a million-token context window still loses everything when the session ends. A model trained on the entire internet still cannot tell you whether its advice from last week turned out to be good or bad.

These gaps are architectural. They require systems around the model, not inside it. Memory is a database, not a weight matrix. Decision trails are documents, not token predictions. Drift detection is comparison against a persistent record. Pressure is an external constraint. Self-judgment requires a second observer. Wisdom accumulates in a self-model that persists across sessions.

That is why you orchestrate intelligence instead of training it.

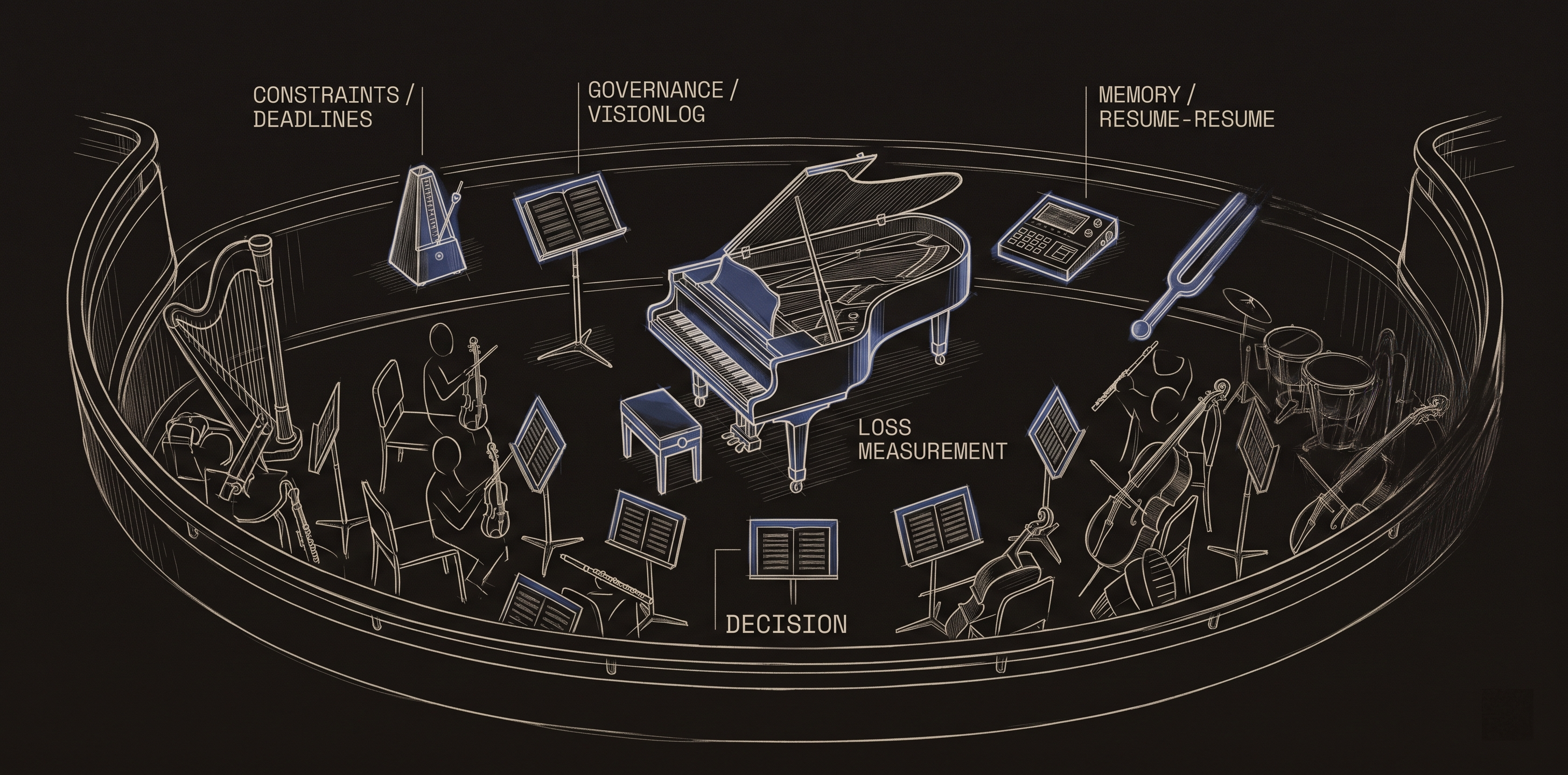

The orchestra, not the soloist

A single brilliant musician can play a concerto. But an orchestra produces textures, harmonics, and dynamics that no soloist can — not because each musician is better, but because the interaction between different instruments playing different parts creates something none of them contain individually.

The LLM is the soloist — brilliant at its instrument. But the consciousness layer that watches for drift, the constraints that create pressure, the trilogy that records decisions and tracks execution, the language passing between differently-trained models that creates serendipity — those are the orchestra. Each component is simple. The intelligence is in their interaction.

You do not need a better model. You need a better architecture around the model you already have.