Language as Momentum

The math behind multi-agent serendipity.

When one model talks to another, the words between them are not just communication. They are a mathematical operation: a lossy projection through a shared bottleneck that forces discontinuous jumps between differently-trained latent spaces. That operation overcomes what floating-point gradients cannot.

The precision trap

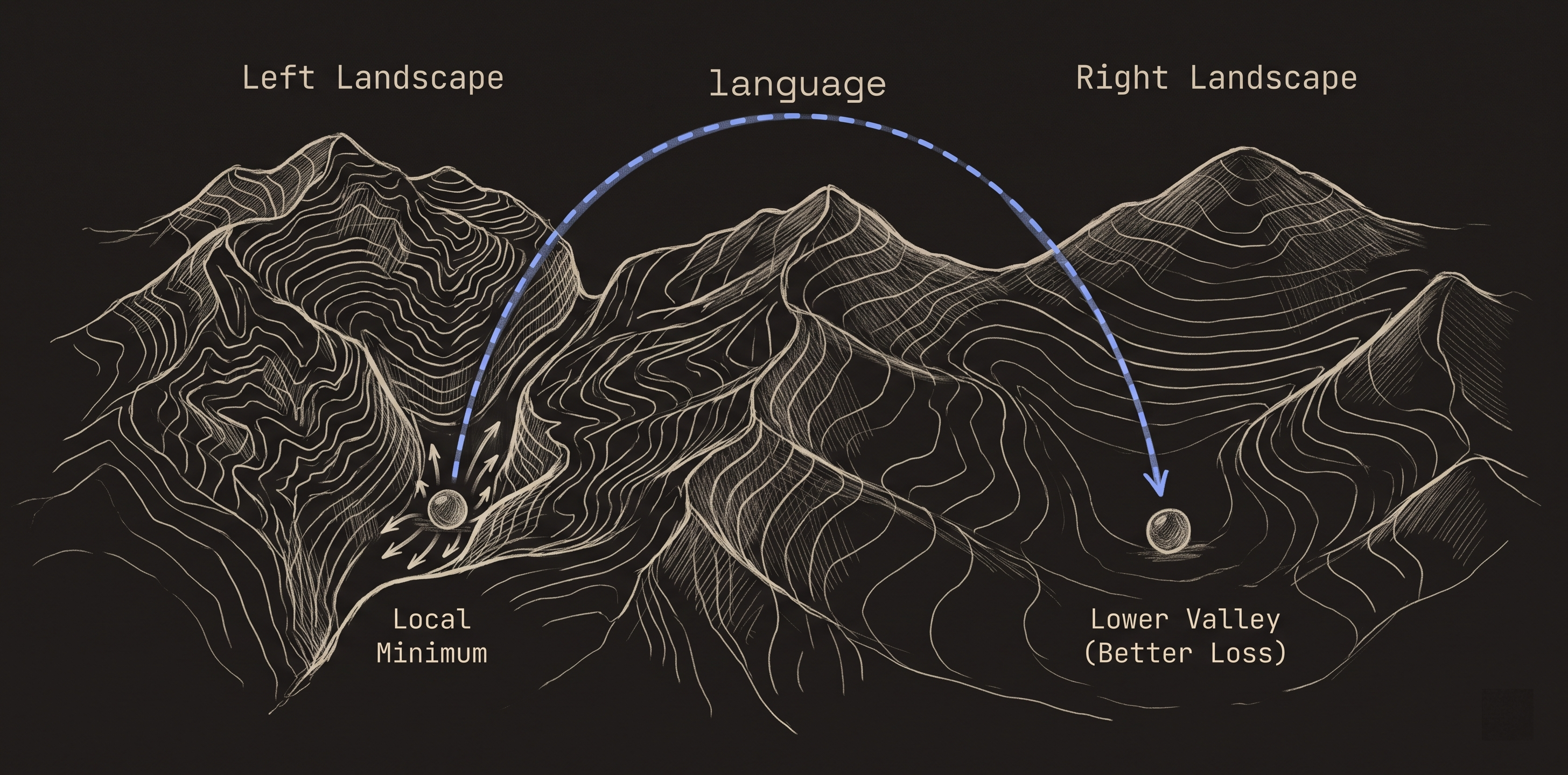

Gradient descent is trapped by its own precision. Floating point updates are smooth, continuous, differentiable — and that is exactly why they get stuck. The gradient says "move 0.00001 in this direction" and the model inches along the floor of a valley it can never climb out of. The math is too precise to escape.

This is the local minima problem. Backpropagation is local. It can only see the slope immediately under its feet. It has no mechanism for jumping to a different region of the loss landscape. Every update is a tiny step that follows the local curvature. If the local curvature leads to a dead end, the model stays in the dead end. More compute, more data, more parameters — none of these change the fact that the steps are continuous and the trap is a valley with no smooth exit.
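
A minimal sketch of the trap, assuming a one-dimensional toy loss chosen purely for illustration. The function `loss`, the starting points, and the learning rate are assumptions, not taken from any real model. The loss has a shallow valley on the right and a deeper one on the left, and plain gradient descent started on the right never crosses the ridge between them.

```python
# Toy 1-D loss with two valleys (an illustrative assumption, not a real model's loss).
def loss(x: float) -> float:
    return x**4 - 3 * x**2 + x


def grad(x: float) -> float:
    # Analytic derivative of the toy loss.
    return 4 * x**3 - 6 * x + 1


def descend(x: float, lr: float = 0.01, steps: int = 2000) -> float:
    # Plain gradient descent: every update is a small step along the local slope.
    for _ in range(steps):
        x -= lr * grad(x)
    return x


x_stuck = descend(2.0)   # start on the right-hand slope
x_deep = descend(-2.0)   # start on the left-hand slope

print(f"from x=2.0:  x={x_stuck:.3f}, loss={loss(x_stuck):.3f}")
print(f"from x=-2.0: x={x_deep:.3f}, loss={loss(x_deep):.3f}")
```

The right-hand start settles near x ≈ 1.13 with a loss of roughly -1.07, while the left-hand start reaches x ≈ -1.30 with a loss of roughly -3.51. Running more steps from the right does not change where it ends up.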

Language is blocky

When a model produces language, it collapses a high-dimensional internal state into a sequence of discrete tokens. A point in 10,000-dimensional space becomes a handful of symbols from a vocabulary of 100,000. That is a massive dimensionality collapse. Most of the information is destroyed.
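
To put rough numbers on that collapse, here is a back-of-the-envelope calculation. The dimension count and vocabulary size come from the paragraph above. The 32-bit floats and the 20-token message length are assumptions added for illustration. Raw storage size is only an upper bound on how much information a state carries, so the ratio is indicative rather than exact.

```python
import math

state_dims = 10_000      # from the text
vocab_size = 100_000     # from the text
bits_per_float = 32      # assumption: float32 activations
message_tokens = 20      # assumption: "a handful" of tokens

state_bits = state_dims * bits_per_float
bits_per_token = math.log2(vocab_size)   # upper bound on bits carried by one token
message_bits = message_tokens * bits_per_token
ratio = state_bits / message_bits

print(f"state:   {state_bits} bits (storage upper bound)")
print(f"message: {message_bits:.0f} bits at most")
print(f"ratio:   about {ratio:.0f}x")
```

Under these assumptions each token carries at most about 16.6 bits, so the message is roughly three orders of magnitude smaller than the state it summarizes.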

This destruction is usually treated as a limitation. We talk about the "information bottleneck" of language. We build longer context windows to preserve more of the original state. We treat lossiness as a problem to solve.

It is not a problem. It is the mechanism.

The projection

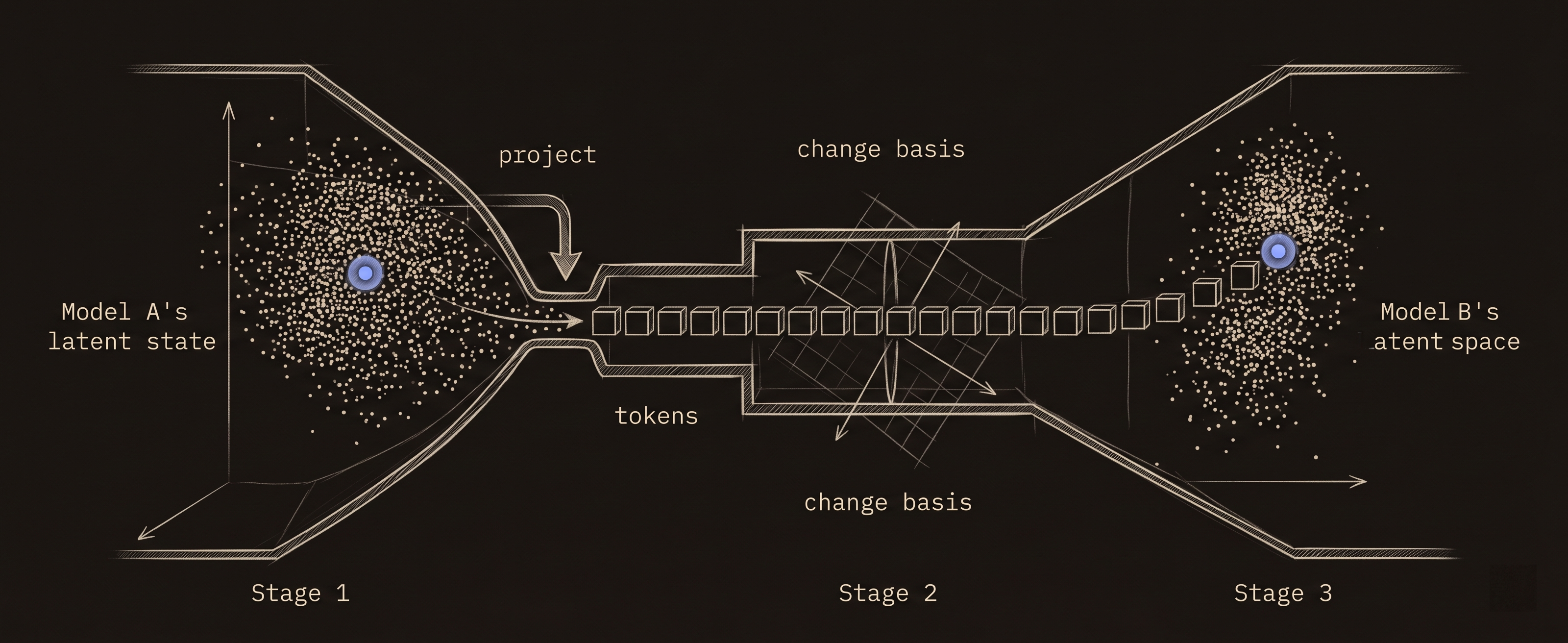

When Model A sends a message to Model B, three things happen in sequence:

- Project down. Model A takes its internal state and compresses it into tokens. This is a projection from a high-dimensional continuous space into a low-dimensional discrete space. The local gradient information — the precise position in Model A's loss landscape — is destroyed.

- Change basis. The tokens travel to Model B. Model B was trained on different data, with different objectives, different random seeds, different optimization history. Its latent space has entirely different geometry. The same word occupies a different region. The same sentence activates different patterns.

- Unproject up. Model B reconstructs a high-dimensional state from the tokens. But it reconstructs in its own geometry. The point that lands in Model B's space is not the point that left Model A's space. It is the nearest point in Model B's landscape that is consistent with the tokens it received.

This is a mathematical operation: project, transform basis, unproject. The output carries Model B's biases applied to Model A's intent. It is neither what Model A thought nor what Model B would have thought alone. It is a third thing that neither model could have produced in isolation.
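
The three steps can be sketched with a toy nearest-neighbor quantizer. Everything here is a hypothetical stand-in: the two-dimensional "latent spaces", the four-token vocabulary, and the codebooks `CODEBOOK_A` and `CODEBOOK_B` are invented for illustration and do not describe how any real model encodes or decodes text.

```python
import math

# Hypothetical codebooks: token id -> point in each model's own latent space.
CODEBOOK_A = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
CODEBOOK_B = [(2.0, -1.0), (-1.0, 0.5), (0.5, 2.0), (-2.0, -2.0)]


def project(state, codebook):
    # Project down: keep only the id of the nearest codebook entry.
    return min(range(len(codebook)), key=lambda t: math.dist(state, codebook[t]))


def unproject(token, codebook):
    # Unproject up: rebuild a state in the receiver's geometry.
    return codebook[token]


state_a = (0.9, 0.2)
nearby_a = (0.8, 0.1)   # a slightly different internal state in Model A

token = project(state_a, CODEBOOK_A)
token_nearby = project(nearby_a, CODEBOOK_A)
state_b = unproject(token, CODEBOOK_B)   # the basis change happens here

print("token sent:", token, "| nearby state sends:", token_nearby)
print("Model A state:", state_a, "-> Model B state:", state_b)
```

Both of Model A's states collapse to the same token, so the difference between them does not survive the trip. The point Model B reconstructs is determined by its own codebook, not by where Model A started.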

Momentum over local minima

The critical property of this operation: it is discontinuous. The lossy projection through language destroys the gradient information that kept Model A trapped in its local minimum. Model B does not continue Model A's gradient. It does not know Model A was trapped. It reads the tokens and reconstructs from its own landscape — which has different valleys, different ridges, different minima.

This is momentum that floating point gradients cannot produce. Backpropagation is local — it moves along the surface. Language-mediated transfer is non-local — it teleports to wherever the tokens land in a different geometry. The landing point has no mathematical relationship to the local gradient of the sending model.

A smooth, continuous signal between two identical models would reinforce the same local minimum. A lossy, blocky, language-mediated signal between two differently-trained models kicks the receiving model into a region the sending model could not reach.
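
A toy illustration of such a kick, under heavy assumptions. The one-dimensional loss, the rounding encoder `encode_a`, and the decode table `DECODE_B` are all hypothetical, and the table was picked by hand so that the jump lands in the deeper valley. A different table could just as easily land somewhere worse, so this shows only that the jump is discontinuous, not that it is guaranteed to help.

```python
# Toy shared objective with a shallow valley (right) and a deep valley (left).
def loss(x: float) -> float:
    return x**4 - 3 * x**2 + x


def grad(x: float) -> float:
    return 4 * x**3 - 6 * x + 1


def descend(x: float, lr: float = 0.01, steps: int = 2000) -> float:
    # Continuous search: small steps along the local slope.
    for _ in range(steps):
        x -= lr * grad(x)
    return x


def encode_a(x: float) -> int:
    # Hypothetical lossy encoder: Model A reports only the nearest integer bucket.
    return round(x)


# Hypothetical decoder: Model B maps each token to a point in its own geometry.
DECODE_B = {-2: 1.5, -1: 0.5, 0: 0.0, 1: -0.5, 2: -1.5}

x_a = descend(2.0)           # Model A settles in the shallow valley
token = encode_a(x_a)        # the local position and gradient are discarded
x_b_start = DECODE_B[token]  # Model B reconstructs somewhere else entirely
x_b = descend(x_b_start)     # Model B continues from its own starting point

print(f"A stuck at x={x_a:.3f}, loss={loss(x_a):.3f}")
print(f"token={token}, B starts at x={x_b_start}, ends at x={x_b:.3f}, loss={loss(x_b):.3f}")
```

The step from Model A's resting point to Model B's starting point is a table lookup, not a gradient update, which is why no amount of extra descent on Model A's side reproduces it.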

Serendipity is not luck

Serendipity literally means finding something valuable that you were not looking for. In multi-agent systems, this happens structurally — not by chance.

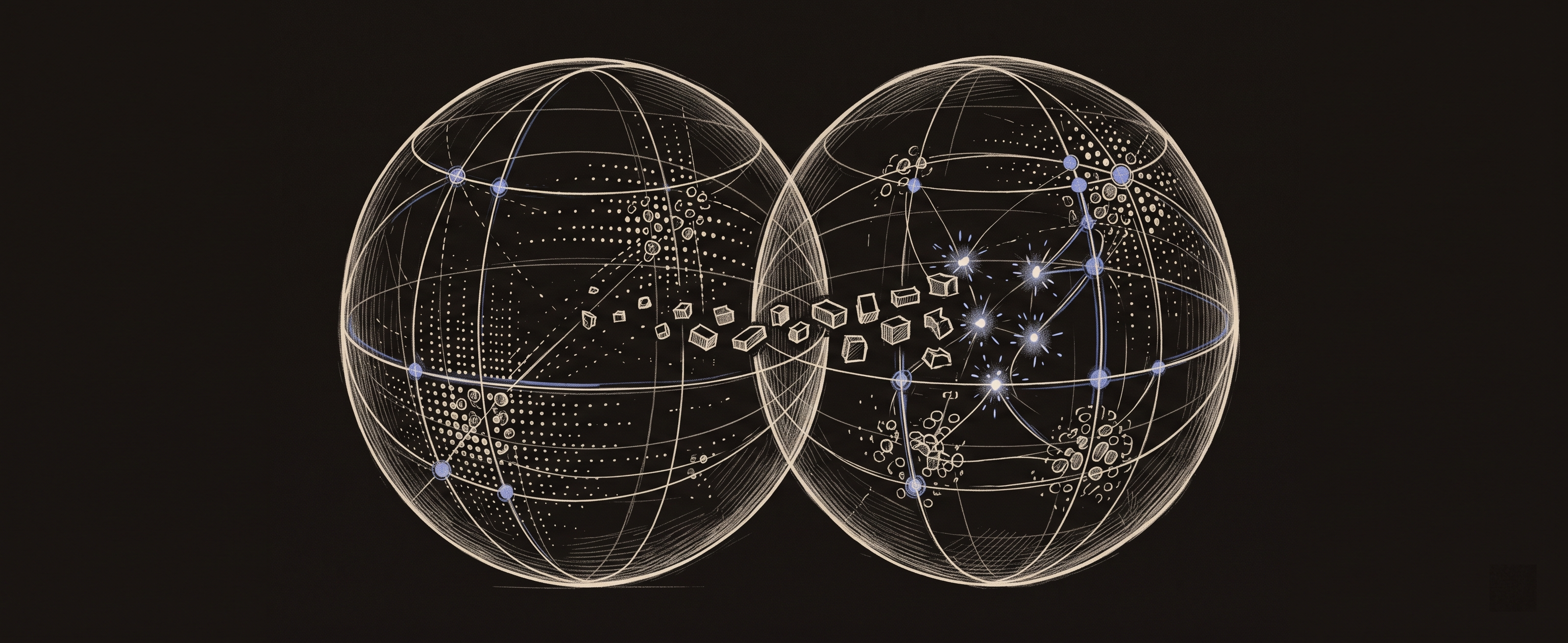

Each model has a different optimization history. Different training data carved different valleys into its loss landscape. When tokens pass between them, the reconstruction in the second model's space produces combinations that neither model's training contained. Not random combinations — combinations structured by the intersection of two different optimization histories colliding through a shared lossy medium.

This is why multi-agent orchestration produces insights that no single model can. Not because two heads are better than one. Because the mathematical operation of passing language between differently-trained models creates discontinuous jumps that escape the precision trap of gradient-based optimization.

Why language and not embeddings

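If you passed Model A's raw hidden states directly to Model B, the dimensions would not align. Different models have different hidden sizes and different embedding geometries, so even when the sizes happen to match, the same coordinate means something different in each model. Raw transfer is garbage in, garbage out.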

Language works as the transfer medium precisely because it is lossy enough to be basis-independent. Both models can project into tokens and out of tokens because tokens are a shared coordinate system — coarse enough that any model can use them, regardless of its internal geometry. The lossiness is what makes language the universal interface between differently-structured minds.
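
A small sketch of the contrast between raw-state transfer and token transfer. The embedding tables `EMBED_A` and `EMBED_B`, their sizes, and the two-word vocabulary are hypothetical values made up for illustration. The only point is that a token id can be looked up in any model's table, while a raw vector fits only the model that produced it.

```python
# Hypothetical embedding tables: same vocabulary, different sizes and values.
EMBED_A = {"valley": [0.1, 0.9, 0.0, 0.3], "ridge": [0.8, 0.2, 0.5, 0.1]}  # 4-dim
EMBED_B = {"valley": [0.7, -0.2, 0.5], "ridge": [-0.4, 0.6, 0.1]}          # 3-dim
HIDDEN_DIM_B = 3


def receive_raw_state(state):
    # Model B can only consume vectors of its own hidden size.
    if len(state) != HIDDEN_DIM_B:
        raise ValueError(f"expected {HIDDEN_DIM_B} dims, got {len(state)}")
    return state


def receive_tokens(tokens):
    # Tokens are looked up in Model B's own table, so they always fit.
    return [EMBED_B[t] for t in tokens]


raw_state_a = EMBED_A["valley"]
try:
    receive_raw_state(raw_state_a)
    raw_ok = True
except ValueError as err:
    raw_ok = False
    print("raw transfer failed:", err)

states_b = receive_tokens(["valley", "ridge"])
print("token transfer:", states_b)
```

Even if both tables had the same width, nothing would force coordinate 0 in one model to mean the same thing as coordinate 0 in the other. The shared vocabulary is the only part the two sides agree on.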

This is also why human language works for human collaboration. No two people have the same brain: different genetics, different experiences, and different wiring shape each one. They can collaborate because they project their internal states into a shared lossy medium, words, and each person reconstructs in their own geometry. The imprecision of language is what makes communication between different minds possible at all.

The implication for AGI

You cannot train a single model to be generally intelligent. Not because models are not powerful enough, but because a single model has a single loss landscape with a single set of local minima. No amount of scaling changes the topology of that landscape. The model gets bigger but the valleys get deeper.

General intelligence requires the ability to escape your own assumptions — to find solutions your training did not prepare you for. That requires discontinuous jumps. Those jumps require a lossy medium between differently-trained systems. That medium is language.

This is the consciousness argument from a different angle. The consciousness layer observes and directs. Language as momentum explains why the multi-agent architecture works mechanistically — not because diversity is nice, but because the math of lossy inter-model communication produces computational results that continuous optimization within a single model cannot.